Key Takeaways

We built the Accelerated File Transfer Protocol (AFTP) because standard protocols couldn't keep up with what businesses actually needed: moving massive files without the constant headaches of timeouts and failed transfers.

• SFTP and HTTPS hit a wall: TCP windowing constraints meant these protocols choked on terabyte transfers, especially over long distances. What should have taken hours stretched into days (or just failed completely).

• Development began in early 2007: bTrade engineers built the first AFTP prototype to overcome the limitations of traditional TCP-based file transfer methods.

• UDP changed everything: By building AFTP on UDP instead of TCP, we could finally use bandwidth that had been sitting idle, up to 100% of it. Some customers saw up to 100x faster delivery compared to their old methods.

• Limited enterprise release came in 2008: Early deployments validated major improvements in performance, reliability, and operational efficiency.

• The first major media deployment came in 2009: Media organizations used AFTP to move massive production files globally with dramatically faster delivery.

• Banking and eDiscovery expansion followed in 2011: Financial institutions adopted AFTP to securely move large compliance, legal, and regulatory datasets.

• From media to manufacturing and beyond: What started as a solution for moving massive video files now handles everything from bank compliance data and eDiscovery datasets to hybrid MFT replication, across industries worldwide.

• Workflows, not just transfers: TDXchange turns AFTP into governed business processes with real-time visibility and compliance controls baked in.

The result? Large file movement went from being an operational risk that kept people up at night to something you could actually depend on.

Here's the thing: the tools our customers relied on simply weren't built for what they needed to do. Back in the early 2000s, organizations kept running into the same brick wall. Media companies watched video files crawl across networks for days. Banks couldn't move compliance data fast enough to meet regulatory deadlines. Everyone was stuck with SFTP and HTTPS, protocols that were fine for smaller files but completely fell apart when you needed to move terabytes.

We realized this wasn't a problem you could patch. It required starting over. So we engineered what became the Accelerated File Transfer Protocol (AFTP), and it completely changed how businesses think about moving massive datasets. This is the story of how we got there, the technical breakthroughs that made it possible, the challenges we hit along the way, and how AFTP became something industries now depend on.

When File Transfer Hit the Wall

The Problem Nobody Wanted to Talk About

Media companies were waiting days for movie files that should have moved in hours. Banking institutions watched compliance deadlines slip by while massive datasets crawled across networks at speeds that made dial-up look competitive. File sizes weren't getting smaller; quite the opposite.

At bTrade, we were fielding calls from frustrated production teams who couldn't predict when critical assets would arrive. One media client told us, "We're planning shoots around file transfer times instead of creative schedules. That's backwards."

Banking clients faced equally pressing challenges. During eDiscovery processes, they collected terabytes of emails, documents, and transaction records that needed to reach legal firms, auditors, and regulators across the globe. Time-sensitive compliance requirements left zero room for the kind of delays that had become routine with traditional methods.

The Real Cost of Transfer Failures

Production teams stopped trusting delivery timelines. Manual retries became standard procedure rather than emergency backup. IT departments spent more time babysitting file transfers than managing actual infrastructure.

But the deeper issue wasn't operational; it was architectural. SFTP and HTTPS transfers would start strong, then degrade or fail entirely when moving large datasets over long distances. Network conditions that barely affected web browsing would bring terabyte transfers to their knees.

Banking clients watched SFTP connections time out during critical eDiscovery workflows, putting regulatory compliance at risk. Media companies saw high-priority content deliveries fail at the worst possible moments.

Why Traditional Protocols Couldn't Keep Up

The problem lived at the protocol level. SFTP and HTTPS were built on TCP, which caps how much unacknowledged data can be in flight at once. Over long, high-latency links, a connection spends much of each round trip waiting for acknowledgments, and packet loss makes it slow down further. These protocols simply couldn't utilize available network bandwidth efficiently when dealing with bulk data movement.
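To see why latency matters so much, consider the windowing constraint: a TCP connection can have at most one window of unacknowledged data in flight per round trip, so its throughput can't exceed window / RTT. The sketch below computes that ceiling and the bandwidth-delay product (the amount of data that must be in flight to keep a link busy). The window size and RTT values are illustrative assumptions, not measurements. Modern TCP stacks support window scaling beyond 65,535 bytes, so in practice the limit often comes from buffer tuning and packet loss, but the arithmetic shows why distance hurts.

```python
# Throughput ceiling of a single TCP connection: at most one window of
# unacknowledged data can be in flight per round trip (RTT).

def tcp_window_ceiling_mbps(window_bytes: int, rtt_ms: float) -> float:
    """Upper bound on throughput (Mbit/s) for a given window size and RTT."""
    return window_bytes * 8 / (rtt_ms / 1000.0) / 1e6


def bandwidth_delay_product_bytes(link_mbps: float, rtt_ms: float) -> float:
    """Bytes that must be in flight to keep a link of `link_mbps` fully busy."""
    return link_mbps * 1e6 / 8 * (rtt_ms / 1000.0)


if __name__ == "__main__":
    window = 65_535  # largest window TCP can advertise without window scaling
    for rtt in (10, 100, 200):  # illustrative short-, long-, and very-long-haul RTTs
        print(f"RTT {rtt:>3} ms -> at most {tcp_window_ceiling_mbps(window, rtt):5.1f} Mbit/s")
    # Filling a 1 Gbit/s path with a 100 ms RTT needs about 12.5 MB in flight.
    print(f"BDP, 1 Gbit/s at 100 ms: {bandwidth_delay_product_bytes(1000, 100) / 1e6:.1f} MB")
```

With that window, a 100 ms path tops out around 5.2 Mbit/s no matter how fast the underlying link is.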

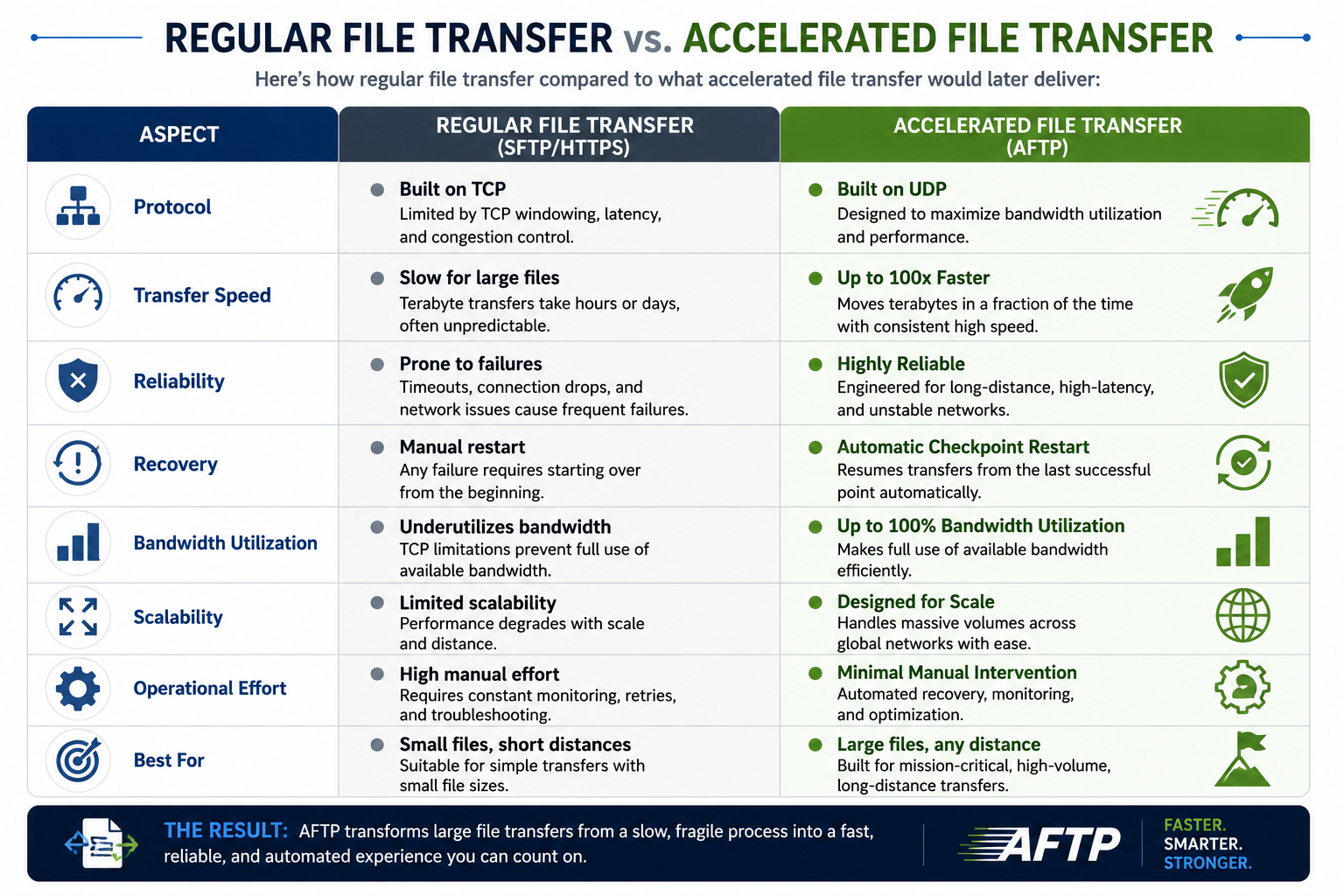

Here's how regular file transfer compared to what Accelerated File Transfer Protocol would later deliver:

Building AFTP: Rethinking File Movement from Scratch

Starting with Real Customer Needs

Our media customers had a simple request that wasn't simple at all: move massive movie files worldwide without the delays and failures that came standard with existing methods. Rather than trying to patch the limitations of SFTP and HTTPS, we decided to start over.

This wasn't about incremental improvements. It was about admitting that the protocols everyone relied on simply weren't built for the bulk data movement requirements that had become the new normal.

The Technical Breakthrough

The answer came from abandoning TCP entirely. We built AFTP on UDP, which let us intelligently utilize available network bandwidth up to 100% (or whatever percentage made sense for the specific environment). This protocol-level change eliminated the TCP windowing and latency constraints that made traditional methods so unpredictable.
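The idea of using "up to 100%, or a configurable percentage" of a link can be illustrated with a token bucket, a standard technique for pacing traffic to a target rate. This is a minimal sketch under stated assumptions: the class name, link speed, datagram size, and burst size are hypothetical, and it is not AFTP's actual rate controller, which isn't described here.

```python
import time
from typing import Optional


class TokenBucketPacer:
    """Hypothetical pacer capping the send rate at a percentage of link capacity.

    Tokens are bytes. They refill continuously at the target rate, and a
    datagram may go out only when enough tokens have accumulated.
    """

    def __init__(self, link_mbps: float, target_percent: float,
                 burst_bytes: int = 64 * 1024, start: Optional[float] = None):
        if not 0 < target_percent <= 100:
            raise ValueError("target_percent must be in (0, 100]")
        self.rate_bytes_per_s = link_mbps * 1e6 / 8 * (target_percent / 100.0)
        self.capacity = float(burst_bytes)
        self.tokens = float(burst_bytes)  # start with a full bucket
        self.last = time.monotonic() if start is None else start

    def delay_before_send(self, size_bytes: int, now: Optional[float] = None) -> float:
        """Return 0.0 and spend tokens if the datagram may go now, else seconds to wait."""
        if size_bytes > self.capacity:
            raise ValueError("datagram larger than burst size")
        now = time.monotonic() if now is None else now
        # Refill for the time elapsed since the last check, never beyond capacity.
        self.tokens = min(self.capacity, self.tokens + (now - self.last) * self.rate_bytes_per_s)
        self.last = now
        if self.tokens >= size_bytes:
            self.tokens -= size_bytes
            return 0.0
        return (size_bytes - self.tokens) / self.rate_bytes_per_s


if __name__ == "__main__":
    # Use 80% of a hypothetical 1 Gbit/s link, sending 1,400-byte datagrams.
    pacer = TokenBucketPacer(link_mbps=1000, target_percent=80)
    sent = 0
    while sent < 1000:
        wait = pacer.delay_before_send(1400)
        if wait:
            time.sleep(wait)
            continue
        sent += 1  # a real sender would transmit the datagram here
    print(f"paced {sent} datagrams at {pacer.rate_bytes_per_s / 1e6:.0f} MB/s")
```

Setting `target_percent` below 100 leaves headroom for other traffic on a shared link; the burst size bounds how far the sender can get ahead of the target rate.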

Making It Work in the Real World

AFTP needed a home, and that home became TDXchange. Our MFT platform provided the orchestration and governance layer that turned bulk file movement from an ad-hoc task into a managed workflow. Real-time monitoring meant visibility into what was happening. Security controls meant consistent protection. Bottleneck identification meant problems got solved instead of ignored.

The result: organizations could treat large file transfers as predictable business processes rather than operational risks.

Security Built In, Not Bolted On

We embedded security into AFTP from day one. End-to-end encryption protected data in transit and at rest. Role-based access controls determined who could initiate or receive transfers. Authentication mechanisms verified everyone involved.

The audit logs proved particularly valuable: every transfer event was recorded, creating tamper-resistant records that satisfied compliance and chain-of-custody requirements in regulated industries. Because when you're moving sensitive data across borders and through multiple hands, "trust us" isn't good enough.
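One common way to make an audit trail tamper-evident is hash chaining: each entry's hash covers both its own content and the previous entry's hash, so editing, reordering, or deleting an earlier entry breaks every link after it. The sketch below shows that general technique; it is not a description of how TDXchange or AFTP store their logs, and the event fields are hypothetical. Truncating the newest entries is only detectable if the latest hash is also stored or signed somewhere else.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(prev_hash: str, event: dict) -> str:
    # Canonical JSON (sorted keys) so the same event always hashes the same way.
    body = json.dumps({"prev": prev_hash, "event": event}, sort_keys=True)
    return hashlib.sha256(body.encode("utf-8")).hexdigest()


def append_event(log: list, event: dict) -> dict:
    """Append an event whose hash covers its content and the previous entry's hash."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    entry = {"prev": prev_hash, "event": event, "hash": _digest(prev_hash, event)}
    log.append(entry)
    return entry


def verify_chain(log: list) -> bool:
    """Recompute every link; editing, reordering, or deleting an earlier entry fails."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev"] != prev_hash or entry["hash"] != _digest(prev_hash, entry["event"]):
            return False
        prev_hash = entry["hash"]
    return True


if __name__ == "__main__":
    log: list = []
    # Hypothetical event fields; a real audit schema would differ.
    append_event(log, {"transfer_id": "T-001", "action": "started", "user": "alice"})
    append_event(log, {"transfer_id": "T-001", "action": "completed", "user": "alice"})
    print(verify_chain(log))  # True
    log[0]["event"]["user"] = "mallory"
    print(verify_chain(log))  # False
```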

The Evolution of AFTP: From Prototype to Enterprise Platform

Early 2007: The First AFTP Prototype

In early 2007, bTrade engineers began developing the first prototype of Accelerated File Transfer Protocol (AFTP).

The goal was clear:

Create a transfer protocol capable of moving massive datasets reliably and predictably across global enterprise networks.

Rather than attempting to optimize traditional TCP-based protocols, the engineering team chose a different path entirely.

AFTP was designed around a UDP-based transfer architecture combined with:

- intelligent bandwidth utilization

- parallel transfer optimization

- checkpoint restart functionality

- resiliency mechanisms

- enterprise workflow integration

This approach eliminated many of the limitations that constrained traditional file transfer methods.
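Two items on that list, chunked (parallelizable) transfer and checkpoint restart, can be sketched together. This assumes a design in which the file is split into fixed-size chunks and the sender persists which chunks the receiver has confirmed, so a restart only resends what's missing. The chunk size, checkpoint file, and JSON format are hypothetical; the article doesn't document AFTP's actual checkpoint mechanism.

```python
import json
import os
import tempfile

CHUNK_SIZE = 4 * 1024 * 1024  # hypothetical 4 MiB chunks


def plan_chunks(file_size: int, chunk_size: int = CHUNK_SIZE) -> list[tuple[int, int]]:
    """Split a file into (offset, length) ranges that could be sent in parallel."""
    return [(off, min(chunk_size, file_size - off)) for off in range(0, file_size, chunk_size)]


def load_checkpoint(path: str) -> set[int]:
    """Indices of chunks already confirmed by the receiver (empty if no checkpoint yet)."""
    if not os.path.exists(path):
        return set()
    with open(path) as f:
        return set(json.load(f))


def save_checkpoint(path: str, done: set[int]) -> None:
    """Write the checkpoint atomically so a crash never leaves a half-written file."""
    tmp = path + ".tmp"
    with open(tmp, "w") as f:
        json.dump(sorted(done), f)
    os.replace(tmp, path)


def remaining_chunks(file_size: int, checkpoint_path: str, chunk_size: int = CHUNK_SIZE) -> list[int]:
    """Chunk indices that still need to be sent after a restart."""
    done = load_checkpoint(checkpoint_path)
    return [i for i in range(len(plan_chunks(file_size, chunk_size))) if i not in done]


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as d:
        ckpt = os.path.join(d, "transfer.ckpt")
        size = 10 * 1024 * 1024         # a 10 MiB file -> 3 chunks of up to 4 MiB
        save_checkpoint(ckpt, {0, 2})   # pretend chunks 0 and 2 landed before the link dropped
        print(remaining_chunks(size, ckpt))  # prints [1]
```

Because each chunk is independent, the same plan could feed several parallel streams; the checkpoint simply records whichever chunks finish first.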

2008: Limited Enterprise Release

By 2008, bTrade introduced a limited release of AFTP into select enterprise environments.

These early deployments focused on validating:

- transfer performance

- resiliency

- operational stability

- scalability

- recovery automation

Enterprise customers quickly observed major improvements compared to traditional methods.

Large file transfers that previously took days could now complete dramatically faster while maintaining reliability across long-distance networks.

This phase also allowed bTrade engineers to refine:

- throughput optimization

- monitoring capabilities

- automated recovery

- operational visibility

- workflow orchestration

The limited release phase proved accelerated file transfer could reliably operate at enterprise scale.

2009: First Major Media Industry Deployment

In 2009, AFTP entered its first major deployment within the media and entertainment industry.

Media organizations faced enormous pressure to move:

- production video files

- post-production assets

- broadcast content

- digital film libraries

- large creative datasets

Traditional protocols often required days to transfer files globally.

Production teams struggled with:

- unreliable delivery windows

- failed transfers

- operational delays

- missed deadlines

AFTP dramatically changed this environment.

By leveraging UDP-based acceleration and intelligent bandwidth utilization, media companies achieved:

- significantly faster delivery

- improved reliability

- predictable transfer performance

- reduced operational overhead

For many organizations, this represented the first time large-scale global file movement became operationally dependable.

How We Built AFTP: The Technical Story

Breaking Free from TCP Constraints

We built AFTP on UDP instead of TCP, which was the breakthrough that changed everything. While TCP imposes windowing constraints and latency limitations, UDP gave us the freedom to intelligently utilize available network bandwidth, up to 100% or any configurable percentage that makes sense for your environment.

AFTP runs directly on top of UDP, so the protocol itself controls how data is sent, paced, and recovered, with every aspect of transmission optimized for sustained bulk movement. Because enterprise environments don't have the luxury of average-case performance, we designed AFTP to maintain consistent throughput regardless of file size or network load.
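Because UDP itself offers no delivery guarantees, any reliable UDP-based transfer protocol has to detect and resend lost packets on its own. One widely used approach is selective negative acknowledgment (NAK): the receiver reports only the gaps in what it has received, and the sender retransmits just those while new data keeps flowing. The sketch below is a generic illustration of computing those gaps, not AFTP's wire format; the function name is hypothetical.

```python
def nak_ranges(received: set[int], highest_seen: int) -> list[tuple[int, int]]:
    """Collapse missing sequence numbers in [0, highest_seen] into inclusive ranges.

    A receiver could send these ranges back as a negative acknowledgment (NAK),
    so the sender retransmits only the gaps while new data keeps flowing.
    """
    ranges: list[tuple[int, int]] = []
    gap_start = None
    for seq in range(highest_seen + 1):
        if seq not in received:
            if gap_start is None:
                gap_start = seq  # first missing packet of a new gap
        elif gap_start is not None:
            ranges.append((gap_start, seq - 1))  # gap closed by a received packet
            gap_start = None
    if gap_start is not None:
        ranges.append((gap_start, highest_seen))
    return ranges


if __name__ == "__main__":
    got = {0, 1, 2, 5, 6, 9}
    print(nak_ranges(got, max(got)))  # [(3, 4), (7, 8)]
```

Reporting ranges rather than individual sequence numbers keeps feedback compact when losses come in bursts.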

Solving the Distance Problem

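Long-distance transfers expose exactly where traditional protocols break down. Because AFTP's sending rate isn't tied to a TCP-style window of unacknowledged data, round-trip time doesn't cap its throughput the way it caps a single TCP connection. AFTP handles latency through multiple mechanisms working together, maintaining predictable performance across geographic distances that would cripple SFTP or HTTPS transfers.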

Our media customers saw up to 100 times faster delivery of production assets compared to their previous methods. Banking clients moved massive eDiscovery datasets in hours instead of days, meeting regulatory timelines that were previously touch-and-go.
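The arithmetic behind "hours instead of days" is simple: transfer time is data size divided by sustained throughput. The rates in this sketch are hypothetical round numbers chosen to show a 100x difference, not measurements from the deployments described here.

```python
ONE_TB = 1e12  # decimal terabyte, in bytes


def transfer_hours(size_bytes: float, throughput_mbps: float) -> float:
    """Hours to move `size_bytes` at a sustained throughput of `throughput_mbps` (Mbit/s)."""
    seconds = size_bytes * 8 / (throughput_mbps * 1e6)
    return seconds / 3600


if __name__ == "__main__":
    # Hypothetical sustained rates, 100x apart; not measured figures.
    for label, mbps in (("10 Mbit/s", 10), ("1 Gbit/s", 1000)):
        hours = transfer_hours(ONE_TB, mbps)
        print(f"1 TB at {label}: {hours:6.1f} hours ({hours / 24:.1f} days)")
```

At 10 Mbit/s a terabyte takes about 222 hours (roughly nine days); at 1 Gbit/s it takes about 2.2 hours.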

The technical architecture isn't complicated; it's just different. We designed it to work the way enterprises actually need to move data: reliably, quickly, and without constant intervention.

When One Solution Became an Industry Standard

Media Companies Showed Us the Way

Media production companies became our testing ground in 2009, though "testing" might be too gentle a word. These organizations needed to move massive movie files across continents without the delays and failures that had become their daily reality. When we delivered up to 100 times faster transfer speeds for production assets, something shifted.

"We went from crossing our fingers and hoping files would arrive to actually planning production schedules around reliable delivery," one post-production manager told us. TDXchange handled the workflow orchestration while AFTP did the heavy lifting. What had been a constant operational risk became a controlled capability.

Banking Clients Brought New Challenges

Banking clients already using TDXchange approached us with a different problem altogether in 2011. Their eDiscovery processes were drowning in timeouts when SFTP and HTTPS couldn't handle massive datasets bound for legal firms and regulators.

We suggested the Accelerated File Transfer Protocol that was already proving itself in media workflows. The results spoke for themselves: banks moved enormous datasets in hours instead of days, meeting regulatory timelines across the USA, Europe, and Asia that had previously put them at compliance risk.

Beyond Single Industries

Accelerated file transfer outgrew its original purpose. We found ourselves applying the same blueprint across media, banking, and manufacturing environments. The pattern stayed consistent: TDXchange orchestrates and governs workflows while AFTP handles sustained bulk data movement. Organizations could design for peak demand rather than average load and use automation to eliminate most manual intervention.

Conclusion

We built AFTP because traditional protocols failed to meet enterprise demands for large file transfers. What started as a solution for media companies evolved into an industry standard across banking, eDiscovery, and manufacturing. The technical breakthrough came from rethinking bulk data movement entirely rather than patching existing limitations. Organizations now transfer terabyte-scale datasets reliably and quickly, with sustained performance that traditional protocols couldn't deliver. Our accelerated file transfer protocol transformed what was once a constant operational risk into a controlled, predictable capability.

About the Author

Andrei Olin is Chief Technology Officer at bTrade, where he leads product strategy, delivery, and security across the company’s B2B, Managed File Transfer (MFT), and security platforms. He brings over 30 years of experience in enterprise technology, including designing and operating mission-critical MFT and messaging platforms for global financial institutions such as Merrill Lynch and Deutsche Bank. Andrei holds Master’s and Bachelor’s degrees in Information Technology with a focus on Information Security.

FAQs

What is the fastest protocol for transferring large files?

Accelerated File Transfer Protocol (AFTP) is engineered specifically for high-speed bulk data movement. Built on UDP rather than TCP, it intelligently utilizes up to 100% of available network bandwidth and maintains consistent throughput regardless of file size or geographic distance, making it significantly faster than traditional protocols for large file transfers.

When was AFTP first developed?

bTrade developed the first AFTP prototype in early 2007. A limited enterprise release followed in 2008, with major deployments in media workflows in 2009 and banking eDiscovery environments in 2011.

Why can't SFTP and HTTPS handle large file transfers efficiently?

SFTP and HTTPS are built on TCP, which imposes fundamental constraints through windowing and latency limitations. These protocols cannot utilize available network bandwidth efficiently when transferring very large datasets over long distances, resulting in slow performance, timeouts, and failures that make them unsuitable for enterprise-scale bulk data movement.

How does accelerated file transfer handle network interruptions?

Accelerated file transfer software includes automatic checkpoint restart functionality. When network disruptions occur, the system automatically resumes from the last successful checkpoint without requiring manual intervention or restarting the entire transfer, which is especially valuable when moving terabyte-scale datasets.

What industries benefit most from accelerated file transfer technology?

Media production companies, banking institutions, eDiscovery operations, and manufacturing environments all benefit significantly. Media companies transfer massive video files worldwide, banks move large datasets for compliance and regulatory requirements, and eDiscovery processes handle enormous volumes of documents and records across global jurisdictions.

How much faster is accelerated file transfer compared to traditional methods?

Organizations using accelerated file transfer technology have achieved up to 100 times faster delivery compared to traditional SFTP and HTTPS methods. Banking clients reduced transfer times from days to hours, while media companies gained predictable delivery timelines for production assets that previously experienced constant delays and failures.

<script type="application/ld+json">

{

"@context": "https://schema.org",

"@type": "Article",

"headline": "How bTrade Invented Accelerated File Transfer: The Origin Story Behind Fast Large File Transfers",

"description": "Discover how bTrade invented AFTP to solve massive file transfer failures. From media to banking, learn why a UDP-based protocol delivers up to 100x faster speeds.",

"author": {

"@type": "Organization",

"name": "bTrade",

"url": "https://www.btrade.com"

},

"publisher": {

"@type": "Organization",

"name": "bTrade",

"logo": {

"@type": "ImageObject",

"url": "https://www.btrade.com/logo.png"

}

},

"datePublished": "2024-12-19",

"dateModified": "2024-12-19",

"mainEntityOfPage": {

"@type": "WebPage",

"@id": "https://www.btrade.com/blogs/accelerated-file-transfer-protocol"

},

"image": {

"@type": "ImageObject",

"url": "https://www.btrade.com/images/accelerated-file-transfer-visualization.jpg",

"width": 1200,

"height": 630

},

"articleSection": "Technology Innovation",

"keywords": [

"accelerated file transfer",

"AFTP",

"accelerated file transfer protocol",

"large file transfer",

"fast file transfer",

"UDP file transfer",

"bTrade innovation",

"TDXchange",

"managed file transfer",

"enterprise file transfer"

],

"about": [

{

"@type": "Thing",

"name": "Accelerated File Transfer Protocol",

"description": "UDP-based protocol for high-speed bulk data movement"

},

{

"@type": "Thing",

"name": "File Transfer Technology",

"description": "Enterprise solutions for moving large datasets"

},

{

"@type": "SoftwareApplication",

"name": "TDXchange",

"applicationCategory": "Managed File Transfer Platform",

"operatingSystem": "Cross-platform"

}

],

"mentions": [

{

"@type": "Thing",

"name": "SFTP",

"description": "Secure File Transfer Protocol"

},

{

"@type": "Thing",

"name": "HTTPS",

"description": "Hypertext Transfer Protocol Secure"

},

{

"@type": "Thing",

"name": "TCP",

"description": "Transmission Control Protocol"

},

{

"@type": "Thing",

"name": "UDP",

"description": "User Datagram Protocol"

}

],

"isPartOf": {

"@type": "Blog",

"name": "bTrade Technology Blog",

"url": "https://www.btrade.com/blog"

}

}

</script>

<script type="application/ld+json">

{

"@context": "https://schema.org",

"@type": "FAQPage",

"mainEntity": [

{

"@type": "Question",

"name": "What is the fastest protocol for transferring large files?",

"acceptedAnswer": {

"@type": "Answer",

"text": "Accelerated File Transfer Protocol (AFTP) is engineered specifically for high-speed bulk data movement. Built on UDP rather than TCP, it intelligently utilizes up to 100% of available network bandwidth and maintains consistent throughput regardless of file size or geographic distance, making it significantly faster than traditional protocols for large file transfers."

}

},

{

"@type": "Question",

"name": "Why can't SFTP and HTTPS handle large file transfers efficiently?",

"acceptedAnswer": {

"@type": "Answer",

"text": "SFTP and HTTPS are built on TCP, which imposes fundamental constraints through windowing and latency limitations. These protocols cannot utilize available network bandwidth efficiently when transferring very large datasets over long distances, resulting in slow performance, timeouts, and failures that make them unsuitable for enterprise-scale bulk data movement."

}

},

{

"@type": "Question",

"name": "How does accelerated file transfer handle network interruptions?",

"acceptedAnswer": {

"@type": "Answer",

"text": "Accelerated file transfer software includes automatic checkpoint restart functionality. When network disruptions occur, the system automatically resumes from the last successful checkpoint without requiring manual intervention or restarting the entire transfer, which is especially valuable when moving terabyte-scale datasets."

}

},

{

"@type": "Question",

"name": "What industries benefit most from accelerated file transfer technology?",

"acceptedAnswer": {

"@type": "Answer",

"text": "Media production companies, banking institutions, eDiscovery operations, and manufacturing environments all benefit significantly. Media companies transfer massive video files worldwide, banks move large datasets for compliance and regulatory requirements, and eDiscovery processes handle enormous volumes of documents and records across global jurisdictions."

}

},

{

"@type": "Question",

"name": "How much faster is accelerated file transfer compared to traditional methods?",

"acceptedAnswer": {

"@type": "Answer",

"text": "Organizations using accelerated file transfer technology have achieved up to 100 times faster delivery compared to traditional SFTP and HTTPS methods. Banking clients reduced transfer times from days to hours, while media companies gained predictable delivery timelines for production assets that previously experienced constant delays and failures."

}

}

]

}

</script>